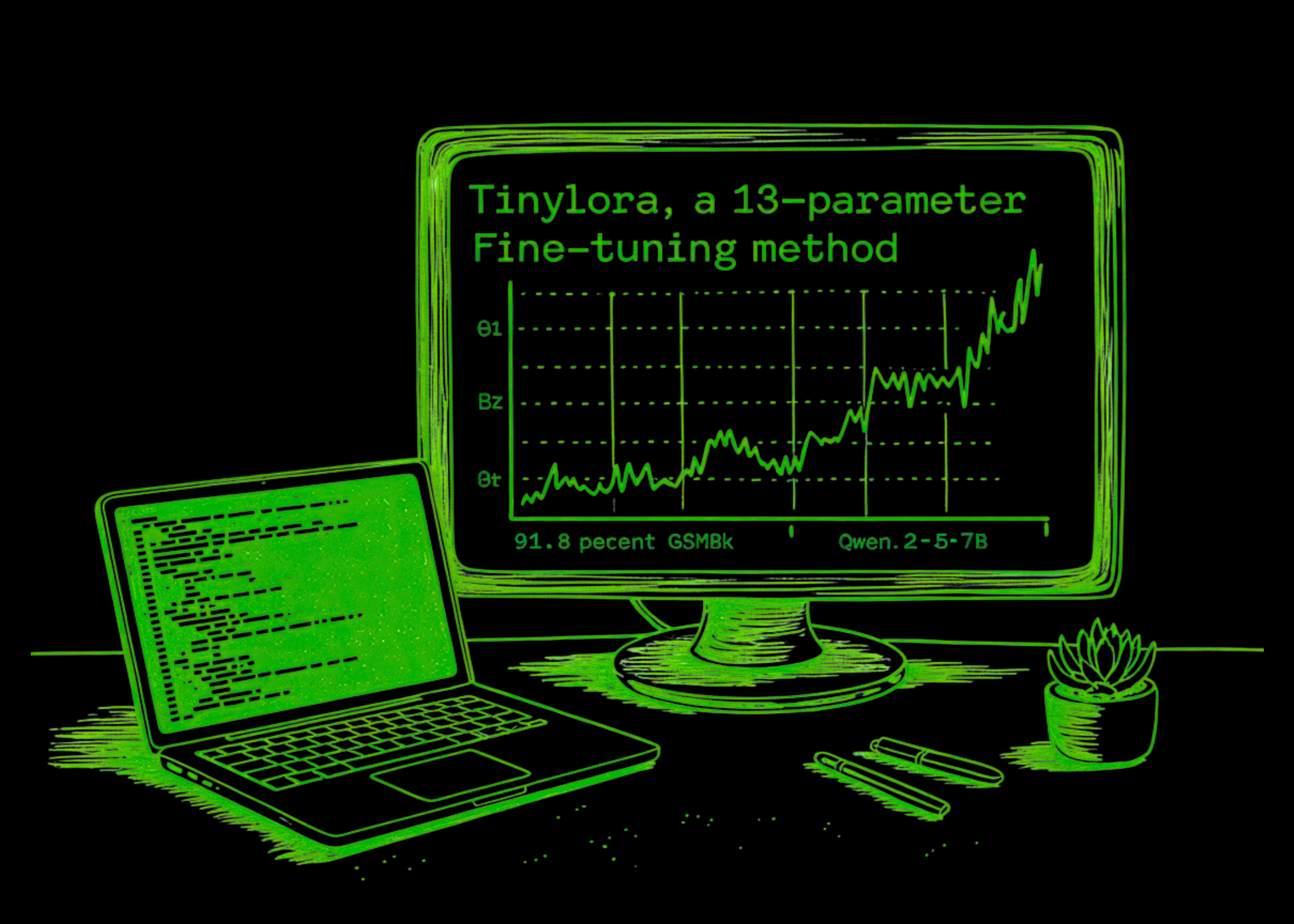

Researchers from FAIR at Meta, Cornell University, and Carnegie Mellon University have demonstrated that large language models (LLMs) can learn to reason using a remarkably small number of trained parameters. The research team introduces TinyLoRA, a parameterization that can scale down to a single trainable parameter under extreme sharing settings. Using this method on a Qwen2.5-7B-Instruct backbone, the research team achieved 91.8% accuracy on the GSM8K benchmark with only 13 parameters, totaling just 26 bytes in bf16.

Overcoming the Constraints of Standard LoRA

Standard Low-Rank Adaptation (LoRA) adapts a frozen linear layer $W \in \mathbb{R}^{d \times k}$ using trainable matrices $A \in \mathbb{R}^{d \times r}$ and $B \in \mathbb{R}^{r \times k}$. The trainable parameter count in standard LoRA still scales with layer width and rank, which leaves a nontrivial lower bound even at rank 1. For a model like Llama3-8B, this minimum update size is roughly 3 million parameters.
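The rank-1 lower bound can be checked with a quick back-of-the-envelope count. The sketch below assumes the publicly documented Llama-3-8B layer shapes and assumes that all seven linear projections in every transformer block are adapted; the article does not say which modules the estimate covers, so treat the exact total as illustrative.

```python
# Rank-r LoRA adds r * (d_in + d_out) parameters per adapted linear layer.
# Shapes are the published Llama-3-8B dimensions; adapting all seven
# projections in every layer is an assumption made for this estimate.
HIDDEN, INTERMEDIATE, KV_DIM, N_LAYERS = 4096, 14336, 1024, 32

LAYER_SHAPES = {  # name: (d_in, d_out)
    "q_proj": (HIDDEN, HIDDEN),
    "k_proj": (HIDDEN, KV_DIM),
    "v_proj": (HIDDEN, KV_DIM),
    "o_proj": (HIDDEN, HIDDEN),
    "gate_proj": (HIDDEN, INTERMEDIATE),
    "up_proj": (HIDDEN, INTERMEDIATE),
    "down_proj": (INTERMEDIATE, HIDDEN),
}


def lora_param_count(rank: int) -> int:
    """Total LoRA parameters across all layers for a given rank."""
    per_layer = sum(rank * (d_in + d_out) for d_in, d_out in LAYER_SHAPES.values())
    return per_layer * N_LAYERS


rank1_total = lora_param_count(1)
print(f"{rank1_total:,}")  # 2,621,440
```

Under these assumptions the rank-1 floor comes out at about 2.6 million parameters, in line with the "roughly 3 million" figure quoted above.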

TinyLoRA circumvents this by building upon LoRA-XS, which uses the truncated Singular Value Decomposition (SVD) of frozen weights. Whereas LoRA-XS typically requires at least one parameter per adapted module, TinyLoRA replaces the trainable matrix with a low-dimensional trainable vector $v \in \mathbb{R}^{u}$ projected through a fixed random tensor $P \in \mathbb{R}^{u \times r \times r}$.

The update rule is defined as:

$$W' = W + U \Sigma \left( \sum_{i=1}^{u} v_{i} P_{i} \right) V^{\top}$$

By applying a weight tying factor ($n_{\text{tie}}$), the total number of trainable parameters scales as $O(nmu/n_{\text{tie}})$, where $n$ and $m$ count the layers and the adapted modules per layer. This allows updates to scale down to a single parameter when all modules across all layers share the same vector.
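The update rule and the parameter count can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' implementation: the matrix sizes, the zero initialization of `v`, and the counting rule in `trainable_params` (number of distinct shared vectors times `u`) are assumptions chosen for the example.

```python
import math

import numpy as np


def tinylora_update(W, v, P, r):
    """Return W' = W + U_r Sigma_r (sum_i v_i P_i) V_r^T for a frozen weight W."""
    # Truncated SVD of the frozen weight; U, S, and Vt are never trained.
    U, S, Vt = np.linalg.svd(W, full_matrices=False)
    U_r, S_r, Vt_r = U[:, :r], S[:r], Vt[:r, :]
    # Mix the fixed random r x r matrices using the trainable coefficients v.
    R = np.tensordot(v, P, axes=1)  # shape (r, r)
    return W + U_r @ np.diag(S_r) @ R @ Vt_r


def trainable_params(n_layers, n_modules, u, n_tie):
    """Assumed counting rule: distinct shared vectors times their length u."""
    return math.ceil(n_layers * n_modules / n_tie) * u


rng = np.random.default_rng(0)
d, k, r, u = 8, 6, 2, 3             # toy sizes, not taken from the paper
W = rng.standard_normal((d, k))     # frozen weight
P = rng.standard_normal((u, r, r))  # fixed random tensor, never trained

W_zero = tinylora_update(W, np.zeros(u), P, r)  # v = 0 leaves W unchanged
W_new = tinylora_update(W, np.array([0.1, -0.2, 0.05]), P, r)

# Illustrative counts for a model with 28 layers and 7 adapted modules each.
untied = trainable_params(28, 7, u=1, n_tie=1)
fully_tied = trainable_params(28, 7, u=1, n_tie=28 * 7)
```

With `u = 1`, the untied configuration has one parameter per module and the fully tied one collapses to a single scalar shared by every module, which is the extreme case the text describes. The weight change `W_new - W` always has rank at most `r`, since it is sandwiched between the truncated SVD factors.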

Reinforcement Learning: The Catalyst for Tiny Updates

A core finding of the research is that Reinforcement Learning (RL) is fundamentally more efficient than Supervised Finetuning (SFT) at extremely low parameter counts. The research team reports that models trained via SFT require updates 100 to 1,000 times larger to reach the same performance as those trained with RL.

This gap is attributed to the 'information density' of the training signal. SFT forces a model to absorb many bits of information, including stylistic noise and irrelevant structures of human demonstrations, because its objective treats all tokens as equally informative. In contrast, RL (specifically Group Relative Policy Optimization, or GRPO) provides a sparser but cleaner signal. Because rewards are binary (e.g., exact match for a math answer), reward-relevant features correlate with the signal while irrelevant variations cancel out through resampling.
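To make the "sparser but cleaner" signal concrete, here is a minimal sketch of the group-relative advantage that GRPO computes from binary rewards. It assumes the commonly used normalization (subtract the group mean, divide by the group standard deviation); the population standard deviation and the `eps` term are choices made for this example, not details confirmed by the article.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-6):
    """Normalize each sampled completion's reward against its own group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std over the group (assumed choice)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four completions sampled for one math prompt: 1.0 = exact match, 0.0 = miss.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)

# A group where every sample is correct carries no learning signal.
flat = group_relative_advantages([1.0, 1.0, 1.0])
```

Correct completions receive a positive advantage and incorrect ones a negative advantage of equal size, regardless of how each answer was phrased. Stylistic differences between samples do not enter the signal at all, which is the contrast with a token-level SFT loss drawn above.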

Optimization Guidelines for Devs

The research team isolated several strategies to maximize the efficiency of tiny updates:

- Optimal Frozen Rank (r): Analysis showed that a frozen SVD rank of r=2 was optimal. Higher ranks introduced too many degrees of freedom, complicating the optimization of the small trainable vector.

- Tiling vs. Structured Sharing: The research team compared 'structured' sharing (modules of the same type share parameters) with 'tiling' (nearby modules of similar depth share parameters). Surprisingly, tiling was more effective, showing no inherent benefit to forcing parameter sharing only between specific projections like Query or Key modules.

- Precision: In bit-constrained regimes, storing parameters in fp32 proved most performant bit-for-bit, even when accounting for its larger footprint compared to bf16 or fp16.
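The two sharing schemes differ only in how modules are assigned to a shared vector. The sketch below is a hypothetical illustration of that assignment: the module names, the depth-first ordering, and the helper functions are assumptions, and the paper's exact grouping may differ.

```python
MODULES = ["q", "k", "v", "o", "gate", "up", "down"]  # assumed module names


def tiling_groups(n_layers, n_tie):
    """Tiling: every n_tie consecutive modules in depth order share a vector."""
    order = [(layer, name) for layer in range(n_layers) for name in MODULES]
    return {key: idx // n_tie for idx, key in enumerate(order)}


def structured_groups(n_layers):
    """Structured: modules of the same type share a vector across all layers."""
    return {
        (layer, name): MODULES.index(name)
        for layer in range(n_layers)
        for name in MODULES
    }


tiled = tiling_groups(n_layers=4, n_tie=7)  # one shared vector per layer
structured = structured_groups(n_layers=4)  # one shared vector per module type

n_tiled_groups = len(set(tiled.values()))
n_structured_groups = len(set(structured.values()))
```

In this toy setup, tiling ties the query projection of layer 0 to the other modules of layer 0, while structured sharing ties it to the query projections of every other layer.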

Benchmark Performance

The research team reports that Qwen-2.5 models generally needed around 10x fewer updated parameters than LLaMA-3 to reach similar performance in their setup.

| Model | Parameters Trained | GSM8K Pass@1 |
| --- | --- | --- |
| Qwen2.5-7B-Instruct (Base) | 0 | 88.2% |
| Qwen2.5-7B-Instruct | 1 | 82.0% |
| Qwen2.5-7B-Instruct | 13 | 91.8% |
| Qwen2.5-7B-Instruct | 196 | 92.2% |
| Qwen2.5-7B-Instruct (Full FT) | ~7.6 billion | 91.7% |

On harder benchmarks like MATH500 and AIME24, 196-parameter updates for Qwen2.5-7B-Instruct retained 87% of the absolute performance improvement of full finetuning across six difficult math benchmarks.

Key Takeaways

- Extreme Parameter Efficiency: It is possible to train a Qwen2.5-7B-Instruct model to achieve 91.8% accuracy on the GSM8K math benchmark using only 13 parameters (26 total bytes).

- The RL Advantage: Reinforcement Learning (RL) is fundamentally more efficient than Supervised Finetuning (SFT) in low-capacity regimes; SFT requires 100–1000x larger updates to reach the same performance level as RL.

- TinyLoRA Framework: The research team developed TinyLoRA, a new parameterization that uses weight tying and random projections to scale low-rank adapters down to a single trainable parameter.

- Optimizing the "Micro-Update": For these tiny updates, fp32 precision is more bit-efficient than half-precision formats, and "tiling" (sharing parameters by model depth) outperforms structured sharing by module type.

- Scaling Trends: As models grow larger, they become more 'programmable' with fewer absolute parameters, suggesting that trillion-scale models could potentially be tuned for complex tasks using just a handful of bytes.
