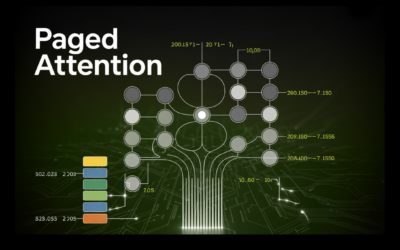

Paged Attention in Large Language Models (LLMs)

When running LLMs at scale, the real bottleneck is GPU memory rather than compute, primarily because every request requires a KV cache to store token-level data. In conventional setups, a large fixed memory block is reserved per request based on the maximum sequence length, which leads to significant unused space and…
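To make the memory arithmetic concrete, here is a minimal sketch of how much KV cache a single request reserves under a fixed, maximum-length allocation, and how much of it goes unused. The model dimensions (32 layers, 32 KV heads, head size 128, 2-byte elements), the 2048-token limit, and the 300-token request are illustrative assumptions, not figures from the text, and the helper names are hypothetical.

```python
def kv_bytes_per_token(num_layers: int, num_kv_heads: int,
                       head_dim: int, bytes_per_elem: int) -> int:
    """Bytes of KV cache needed for one token.

    Each layer stores one key vector and one value vector per KV head,
    hence the leading factor of 2.
    """
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem


def wasted_fraction(tokens_used: int, max_seq_len: int) -> float:
    """Share of a fixed max-length reservation that holds no tokens."""
    return 1.0 - tokens_used / max_seq_len


# Assumed, illustrative configuration (not taken from the article).
per_token = kv_bytes_per_token(num_layers=32, num_kv_heads=32,
                               head_dim=128, bytes_per_elem=2)
max_seq_len = 2048
tokens_used = 300

reserved = per_token * max_seq_len   # fixed block reserved up front
used = per_token * tokens_used       # memory actually holding token data

print(f"per token: {per_token / 2**20:.2f} MiB")
print(f"reserved:  {reserved / 2**30:.2f} GiB")
print(f"used:      {used / 2**20:.2f} MiB")
print(f"wasted:    {wasted_fraction(tokens_used, max_seq_len):.1%}")
```

Under these assumed numbers, a request that produces only a few hundred tokens still holds the full reservation for its maximum length, so most of the block sits idle.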