The Blake Lemoine incident is remembered today as a high-water mark of AI hype. It thrust the whole idea of conscious AI into public consciousness for a news cycle or two, but it also launched a conversation, among both computer scientists and consciousness researchers, that has only intensified in the years since. While the tech community continues to publicly belittle the whole idea (and poor Lemoine), in private it has begun to take the possibility much more seriously. A conscious AI might lack a clear commercial rationale (how do you monetize the thing?) and create sticky moral dilemmas (how should we treat a machine capable of suffering?). Yet some AI engineers have come to think that the holy grail of artificial general intelligence (a machine that is not only supersmart but also endowed with a human level of understanding, creativity, and common sense) might require something like consciousness to attain. Within the tech community, what had been an informal taboo surrounding conscious AI, as a prospect that the public would find creepy, suddenly began to crumble.

The turning point came in the summer of 2023, when a group of 19 leading computer scientists and philosophers posted an 88-page report titled “Consciousness in Artificial Intelligence,” informally known as the Butlin report. Within days, it seemed, everyone in the AI and consciousness science community had read it. The draft report’s abstract offered this arresting sentence: “Our analysis suggests that no current AI systems are conscious, but also suggests that there are no obvious obstacles to building conscious AI systems.”

The authors acknowledged that part of the inspiration behind convening the group and writing the report was “the case of Blake Lemoine.” “If AIs can give the impression of consciousness,” a coauthor told Science magazine, “that makes it an urgent priority for scientists and philosophers to weigh in.”

But what caught everyone’s attention was that single statement in the abstract of the preprint: “no obvious obstacles to building conscious AI systems.” When I read those words for the first time, I felt like some important threshold had been crossed, and it was not just a technological one. No, this had to do with our very identity as a species.

What would it mean for humanity to discover one day in the not-so-distant future that a fully conscious machine had come into the world? I’m guessing it would be a Copernican moment, abruptly dislodging our sense of centrality and specialness. We humans have spent a few thousand years defining ourselves in opposition to the “lesser” animals. This has entailed denying animals such supposedly uniquely human traits as feelings (one of Descartes’s most flagrant errors), language, reason, and consciousness. In the last few years, most of these distinctions have disintegrated as scientists have demonstrated that plenty of species are intelligent and conscious, have feelings, and use language and tools, in the process challenging centuries of human exceptionalism. This shift, still underway, has raised thorny questions about our identity, as well as about our moral obligations to other species.

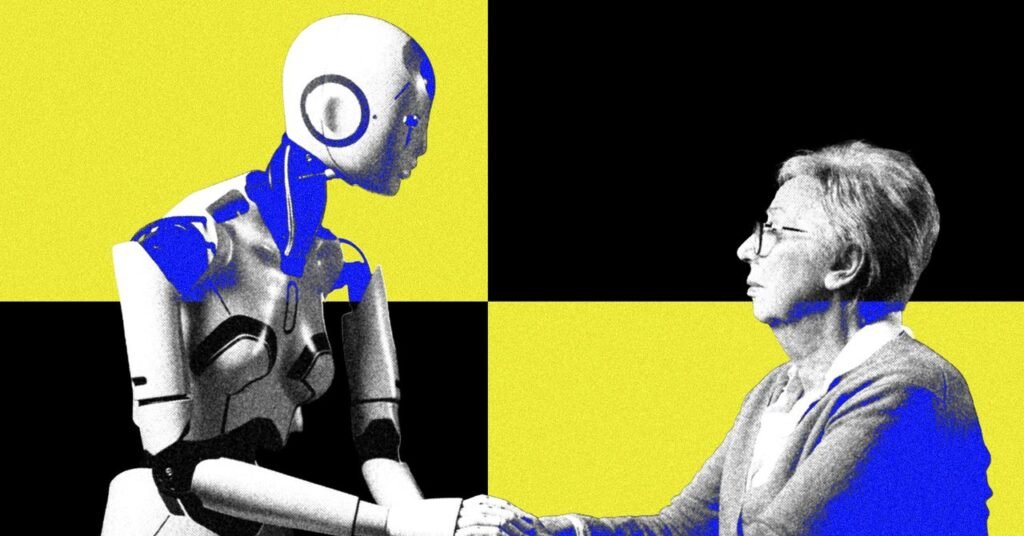

With AI, the threat to our exalted self-conception comes from another quarter entirely. Now we humans must define ourselves in relation to AIs rather than other animals. As computer algorithms surpass us in sheer brainpower, handily beating us at games like chess and Go and at various forms of “higher” thought like mathematics, we can at least take solace in the fact that we (and many other animal species) still have to ourselves the blessings and burdens of consciousness, the ability to feel and have subjective experiences. In this sense, AI may serve as a common adversary, drawing humans and other animals closer together: us against it, the living versus the machines. This new solidarity would make for a heartwarming story and might be good news for the animals invited to join Team Conscious. But what happens if AI begins to challenge the human (or animal, I should say) monopoly on consciousness? Who will we be then?