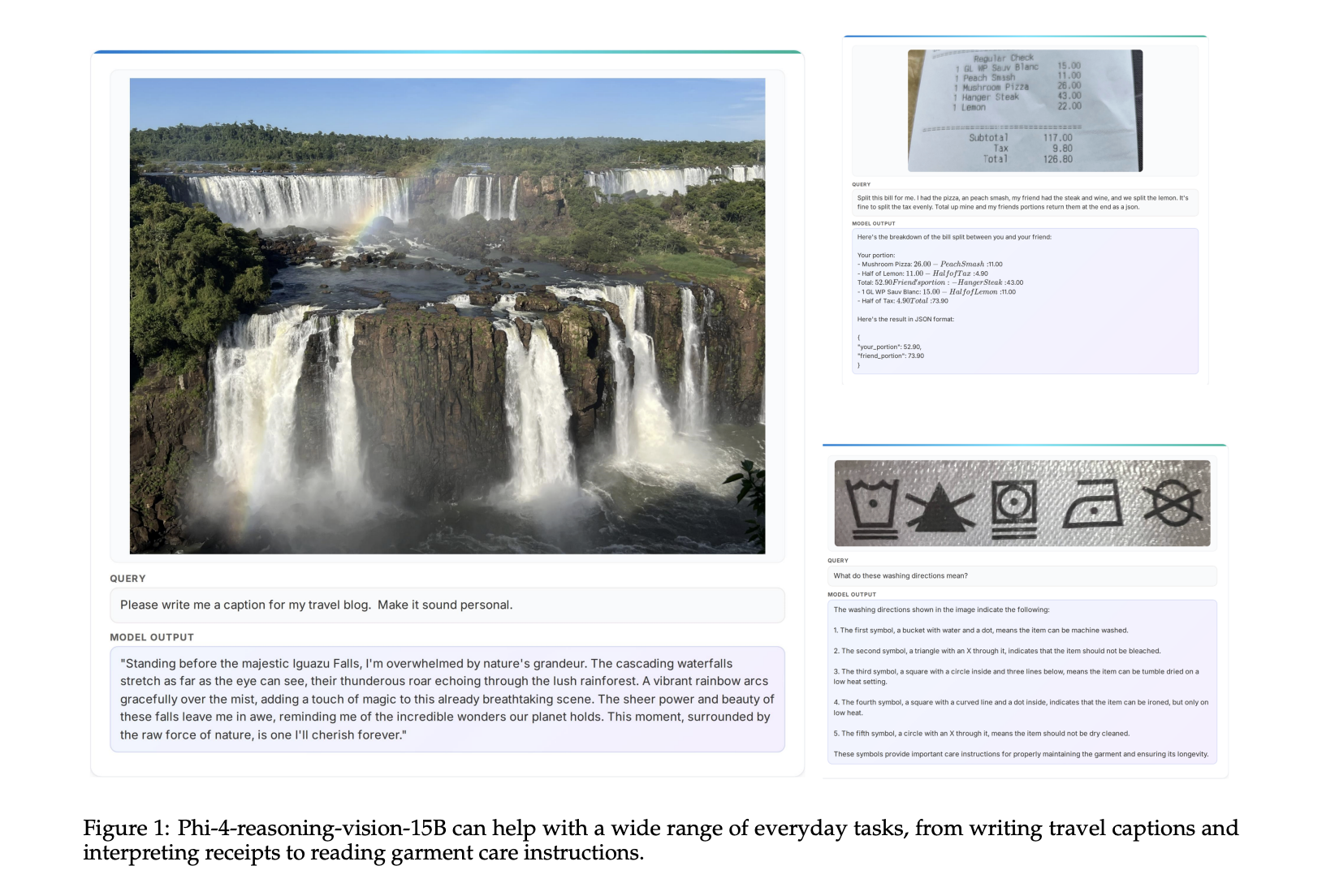

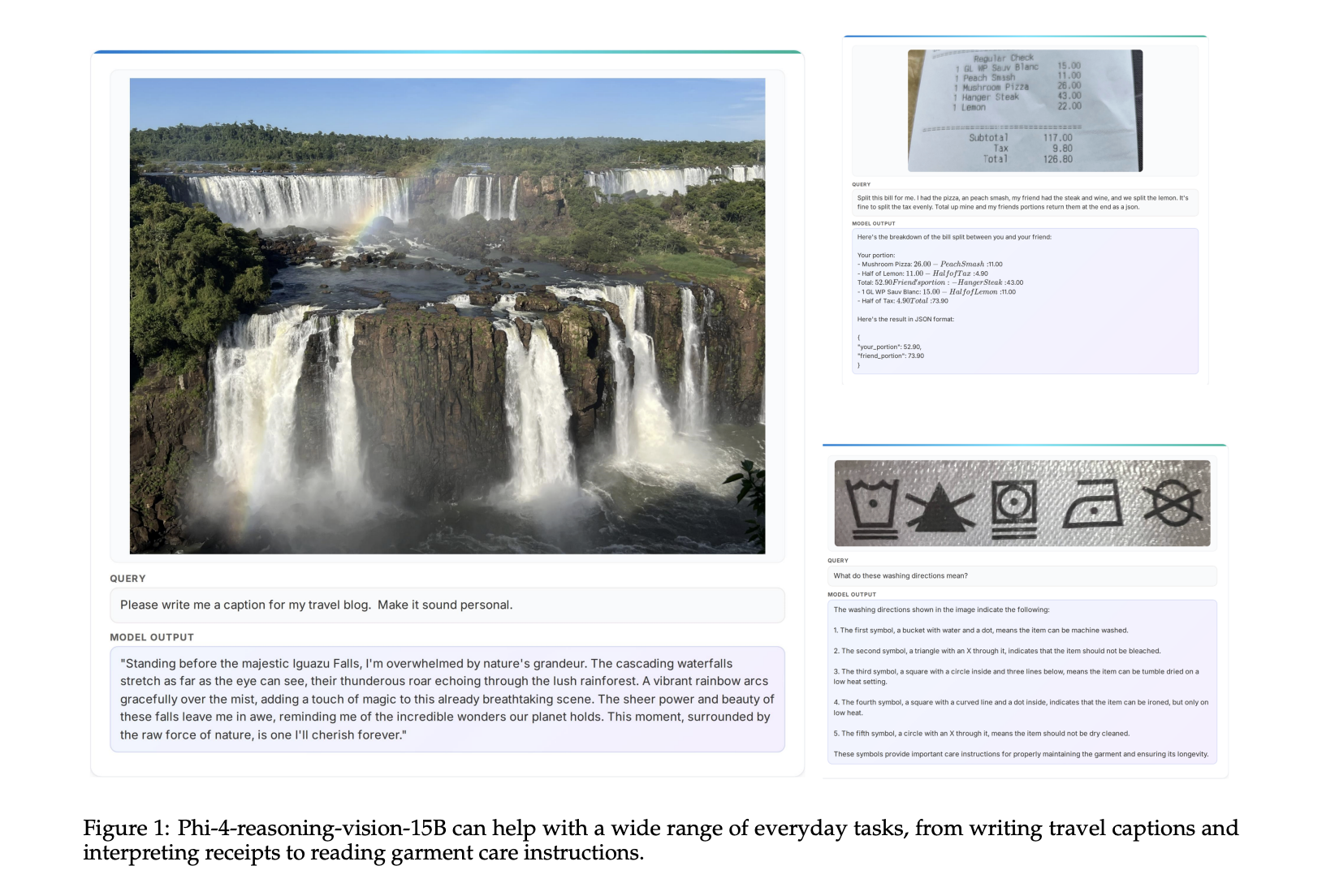

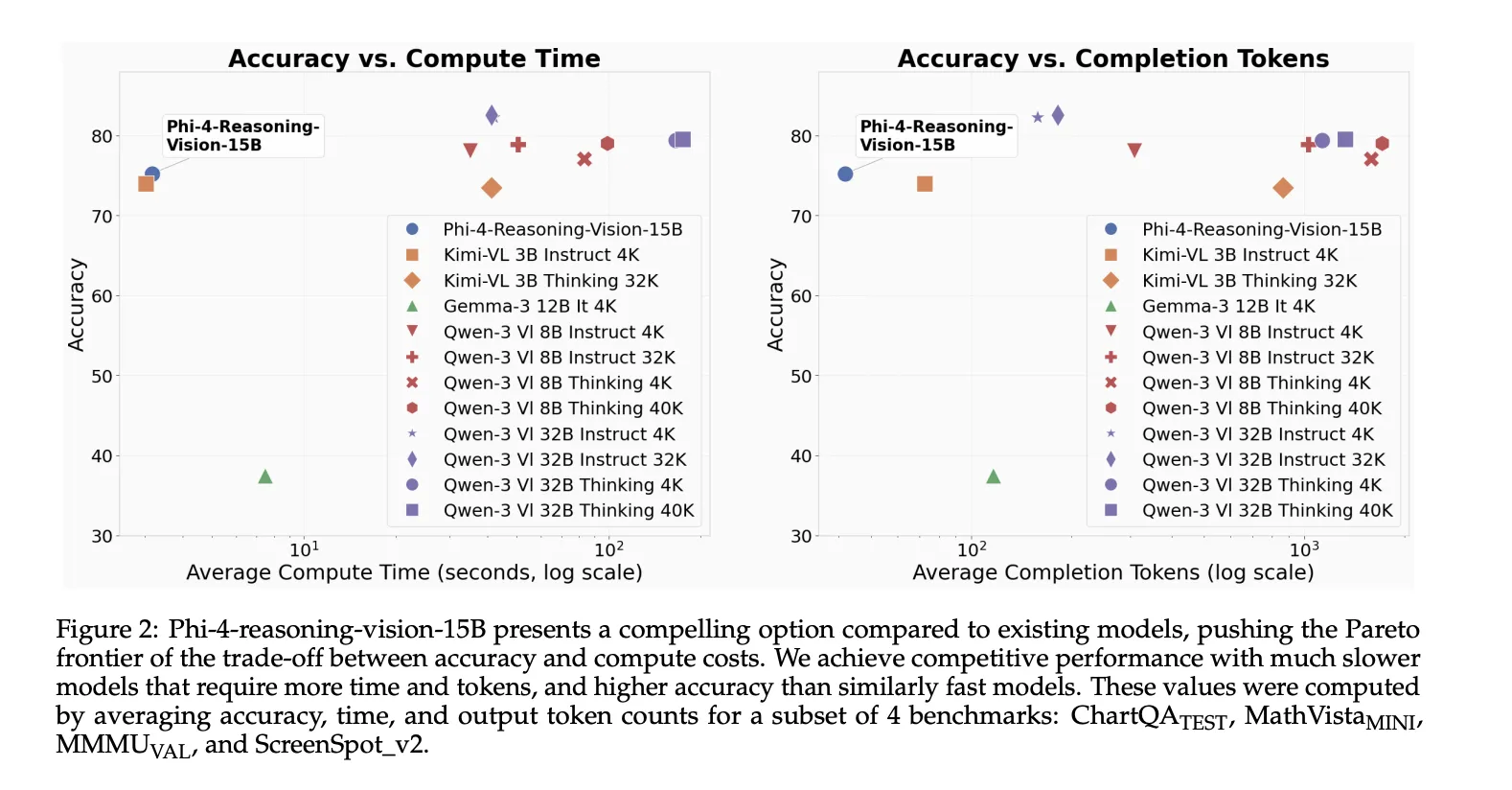

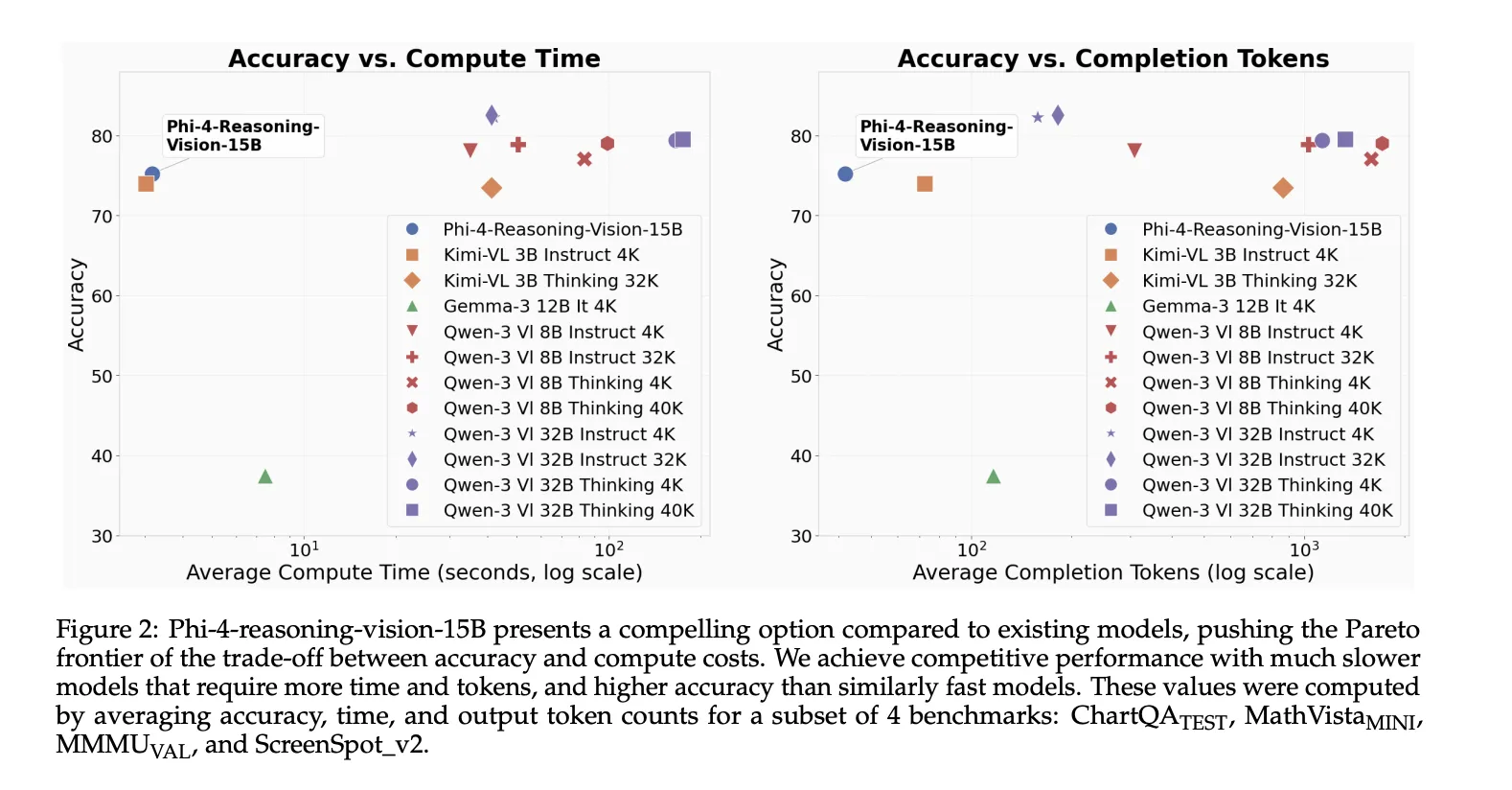

Microsoft has released Phi-4-reasoning-vision-15B, a 15-billion-parameter open-weight multimodal reasoning model designed for image and text tasks that require both perception and selective reasoning. It is a compact model built to balance reasoning quality, compute efficiency, and training-data requirements, with particular strength in scientific and mathematical reasoning and in understanding user interfaces.

What the model is built on

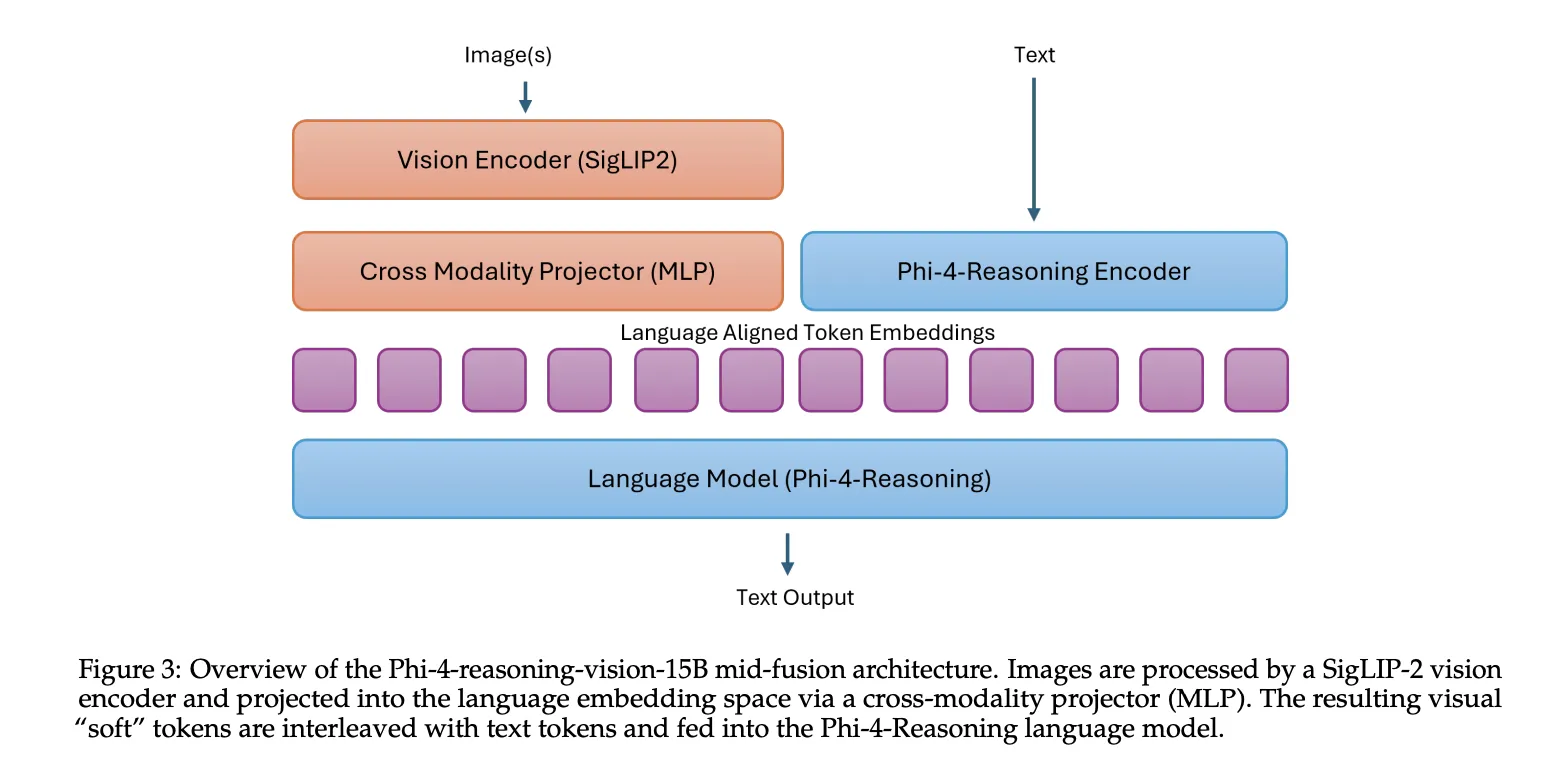

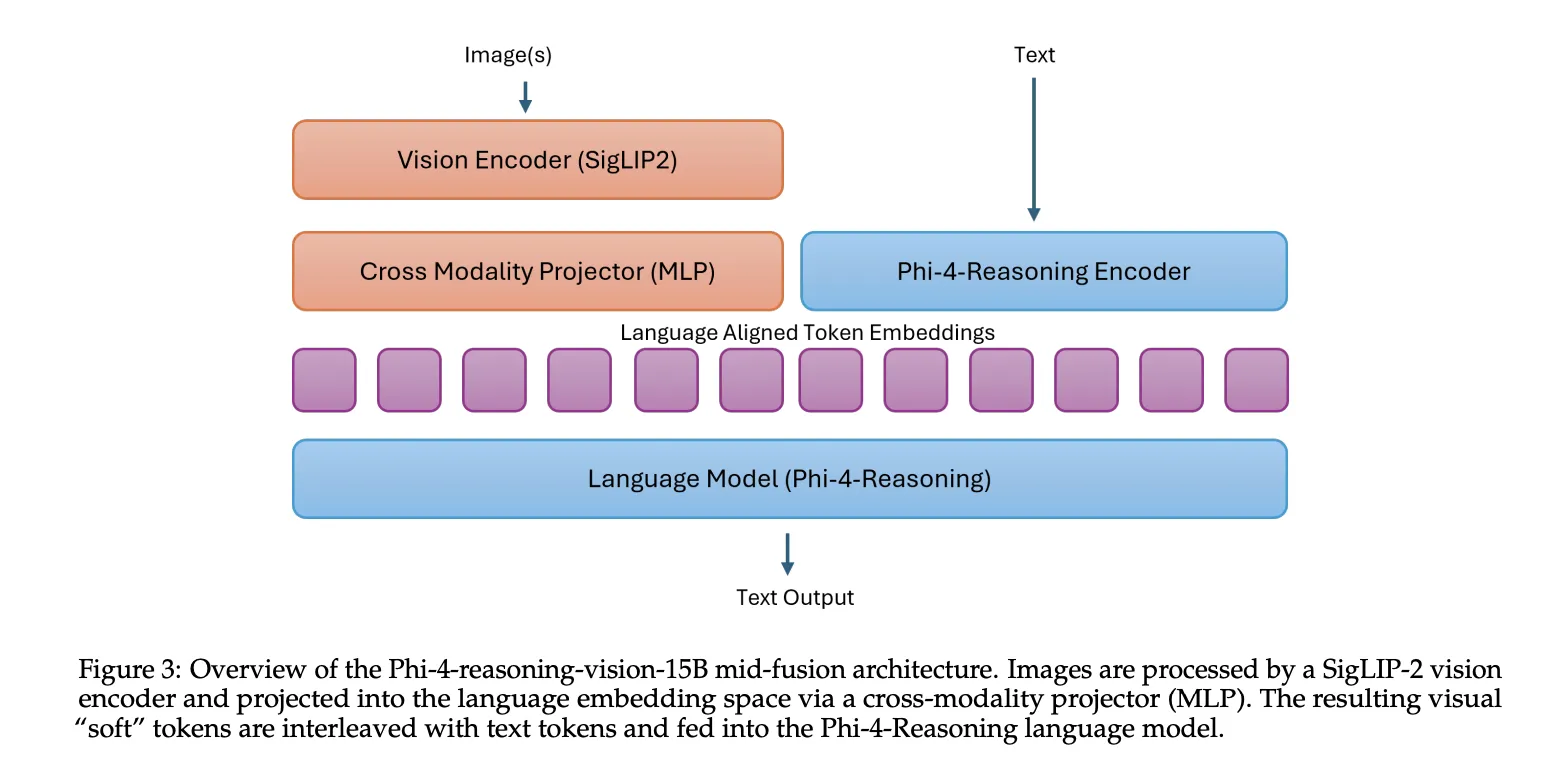

Phi-4-reasoning-vision-15B combines the Phi-4-Reasoning language backbone with the SigLIP-2 vision encoder using a mid-fusion architecture. In this setup, the vision encoder first converts images into visual tokens, then those tokens are projected into the language model embedding space and processed by the pretrained language model. This design acts as a practical trade-off: it preserves strong cross-modal reasoning while keeping training and inference costs manageable compared with heavier early-fusion designs.
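The data flow described above can be sketched in a few lines of plain Python. This is only an illustration of the mid-fusion idea, not Microsoft's implementation: the toy dimensions, the random weights, the single linear projector, and the choice to place visual tokens before text tokens are all assumptions made for the example.

```python
import random

VISION_DIM = 4   # assumption: toy width of a vision-encoder output vector
LM_DIM = 6       # assumption: toy width of a language-model embedding

def project(visual_features, weight):
    """Linearly map each vision vector (VISION_DIM) into the LM embedding space (LM_DIM)."""
    columns = list(zip(*weight))  # weight has shape VISION_DIM x LM_DIM
    return [[sum(v * w for v, w in zip(vec, col)) for col in columns]
            for vec in visual_features]

def mid_fusion_inputs(visual_features, weight, text_embeddings):
    """Return the fused sequence: projected visual tokens followed by text embeddings."""
    return project(visual_features, weight) + text_embeddings

rng = random.Random(0)

def rand_matrix(rows, cols):
    """Hypothetical helper: random stand-in for learned weights or encoder outputs."""
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

visual_features = rand_matrix(3, VISION_DIM)   # stand-in for encoder output (3 patches)
projector = rand_matrix(VISION_DIM, LM_DIM)    # stand-in for a learned projector
text_embeddings = rand_matrix(2, LM_DIM)       # stand-in for 2 embedded text tokens

sequence = mid_fusion_inputs(visual_features, projector, text_embeddings)
print(len(sequence), "tokens of width", len(sequence[0]))
```

The point of the sketch is that the language model only ever sees one sequence of same-width vectors, so the pretrained backbone can be reused without changing its input interface.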

Why Microsoft took the smaller-model route

Many recent vision-language models have grown in parameter count and token usage, which raises both latency and deployment cost. Phi-4-reasoning-vision-15B was built as a smaller alternative that still handles common multimodal workloads without relying on extremely large training datasets or excessive inference-time token generation. The model was trained on 200 billion multimodal tokens, building on Phi-4-Reasoning, which was trained on 16 billion tokens, and ultimately on the Phi-4 base model, which was trained on 400 billion unique tokens. Microsoft contrasts that with the more than 1 trillion tokens used to train several recent multimodal models such as Qwen 2.5 VL, Qwen 3 VL, Kimi-VL, and Gemma 3.
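To put the data-budget comparison in proportion, the short calculation below uses only the two figures quoted above. Because the comparison models are described as using more than 1 trillion tokens, the result is an upper bound on the ratio rather than an exact value; the variable names are illustrative.

```python
from fractions import Fraction

PHI4_RV_MULTIMODAL_TOKENS = 200 * 10**9   # stated in the article
COMPARISON_FLOOR_TOKENS = 10**12          # "more than 1 trillion", so a lower bound

# Dividing by a lower bound on the comparison budget gives an upper bound on the ratio.
ratio_upper_bound = Fraction(PHI4_RV_MULTIMODAL_TOKENS, COMPARISON_FLOOR_TOKENS)
print(f"multimodal training tokens are at most {float(ratio_upper_bound):.0%} "
      "of the comparison budget")
```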

High-resolution perception was a core design choice

One of the more useful lessons in the Microsoft team's technical report is that multimodal reasoning often fails because perception fails first. Models can miss the answer not because they lack reasoning ability, but because they fail to extract the relevant visual details from dense images such as screenshots, documents, or interfaces with small interactive elements.

Phi-4-reasoning-vision-15B uses a dynamic resolution vision encoder with up to 3,600 visual tokens, which is intended to support high-resolution understanding for tasks such as GUI grounding and fine-grained document analysis. The Microsoft team states that high-resolution, dynamic-resolution encoders yield consistent improvements, and explicitly notes that accurate perception is a prerequisite for high-quality reasoning.
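A rough way to see what a 3,600-token cap means for different image sizes is to count patches. The sketch below assumes a patch size of 16 pixels and assumes that oversized images are scaled down with their aspect ratio preserved; neither detail is confirmed by the article, and `visual_token_grid` is a hypothetical helper, not part of any released API.

```python
import math

MAX_VISUAL_TOKENS = 3600  # cap stated in the article
PATCH_SIZE = 16           # assumption: illustrative patch size, not confirmed by the source

def visual_token_grid(width, height, patch=PATCH_SIZE, max_tokens=MAX_VISUAL_TOKENS):
    """Return (cols, rows) of patches, shrinking the grid if it would exceed max_tokens."""
    cols = math.ceil(width / patch)
    rows = math.ceil(height / patch)
    if cols * rows > max_tokens:
        # Scale both sides by the same factor so the aspect ratio is roughly preserved.
        scale = math.sqrt(max_tokens / (cols * rows))
        cols = max(1, math.floor(cols * scale))
        rows = max(1, math.floor(rows * scale))
    return cols, rows

for size in [(224, 224), (1920, 1080), (1920, 1920)]:
    cols, rows = visual_token_grid(*size)
    print(size, "->", cols, "x", rows, "=", cols * rows, "visual tokens")
```

Under these assumptions a small image uses only a few hundred tokens, while a full-HD screenshot is reduced until it fits the budget. That is the practical appeal of dynamic resolution: the token cost tracks the detail actually present in the input.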

Mixed reasoning instead of forcing reasoning everywhere

A second important design decision is the model’s mixed reasoning and non-reasoning training strategy. Rather than forcing chain-of-thought-style reasoning for all tasks, the Microsoft team trained the model to switch between two modes. Reasoning samples include

The goal of this hybrid setup is to let the model respond directly on tasks where longer reasoning adds latency without improving accuracy, while still invoking structured reasoning on tasks such as math and science. The Microsoft team also notes an important limitation: the boundary between these modes is learned implicitly, so switching is not always optimal. Users can override the default behavior through explicit prompting with

Where the model is strongest

The Microsoft team highlights two main application areas. The first is scientific and mathematical reasoning over visual inputs, including handwritten equations, diagrams, charts, tables, and quantitative documents. The second is computer-use agent tasks, where the model interprets screen content, localizes GUI elements, and supports interaction with desktop, web, or mobile interfaces.

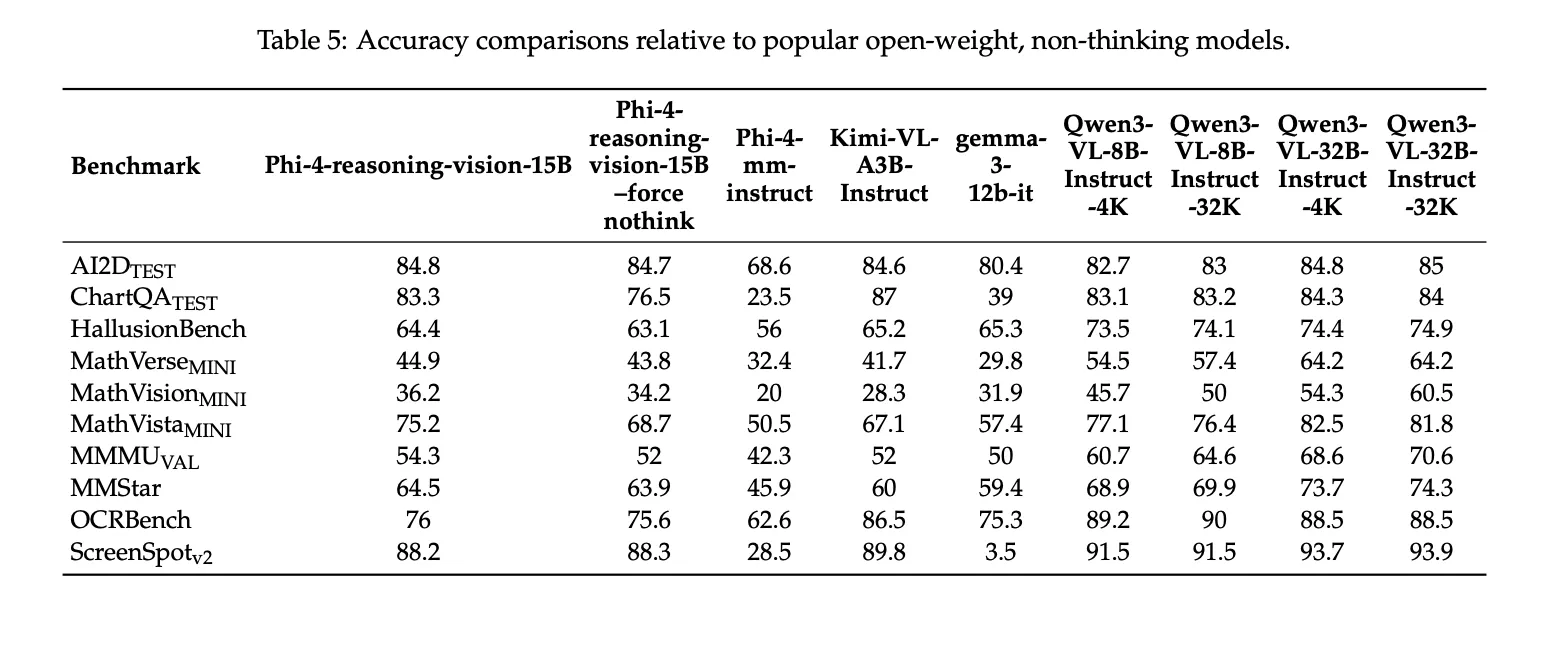

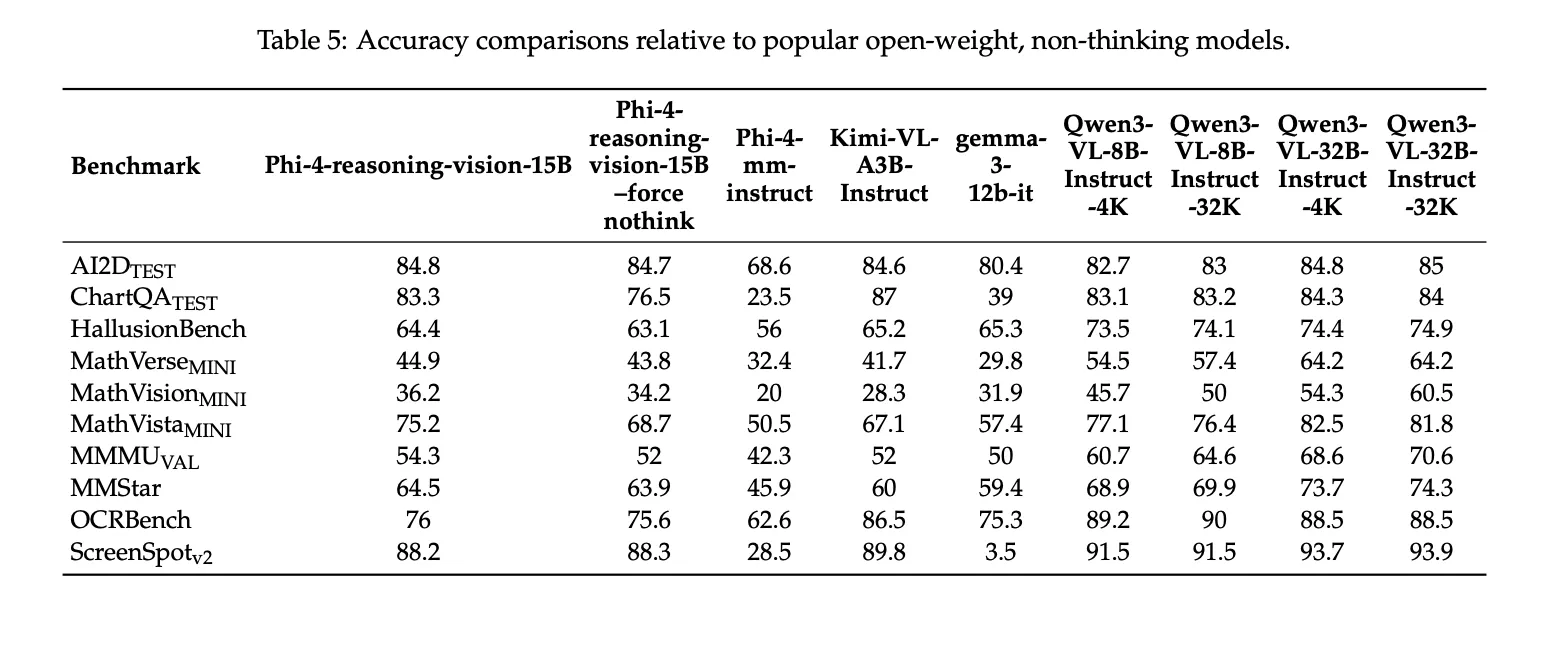

Benchmark results

The Microsoft team reports the following benchmark scores for Phi-4-reasoning-vision-15B: 84.8 on AI2D (test), 83.3 on ChartQA (test), 44.9 on MathVerse (mini), 36.2 on MathVision (mini), 75.2 on MathVista (mini), 54.3 on MMMU (val), 64.5 on MMStar, 76.0 on OCRBench, and 88.2 on ScreenSpot-v2. The technical report also notes that these results were generated using Eureka ML Insights and VLMEvalKit with fixed evaluation settings, and that the team presents them as comparison results rather than leaderboard claims.

Key Takeaways

- Phi-4-reasoning-vision-15B is a 15B open-weight multimodal model built by combining Phi-4-Reasoning with the SigLIP-2 vision encoder in a mid-fusion architecture.

- The Microsoft team designed the model for compact multimodal reasoning, with a focus on math, science, document understanding, and GUI grounding, rather than scaling to a much larger parameter count.

- High-resolution visual perception is a core part of the system, with support for dynamic resolution encoding and up to 3,600 visual tokens, which helps on dense screenshots, documents, and interface-heavy tasks.

- The model uses mixed reasoning and non-reasoning training, allowing it to switch between

- Microsoft’s reported benchmarks show strong performance for its size, including results on AI2D (test), ChartQA (test), MathVista (mini), OCRBench, and ScreenSpot-v2, which supports its positioning as a compact but capable vision-language reasoning model.
